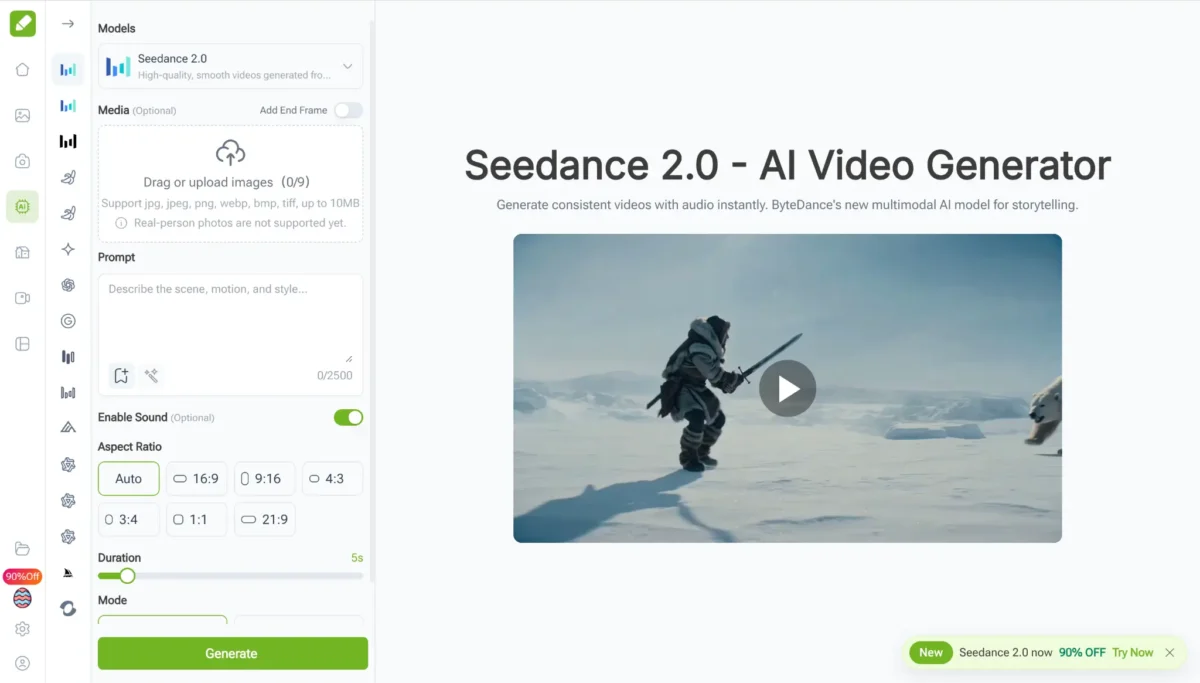

Independent filmmaking in high-energy music videos consistently reveals the gaps in generative AI. Although the industry has been discussing these tools heavily for the past year, many of them remain closer to experimental toys than professional equipment. When you need a subject to perform a complex dance move, or want to capture the explosive spray of water in slow motion, most models simply fall apart and turn the frame into a soup of shifting pixels. My workflow changed significantly when I began using Seedance 2.0 on VisualGPT to handle the heavy lifting of my visual effects and b-roll generation. By integrating the latest generation of video models, this platform has addressed the most persistent problems I ran into in digital motion creation.

The Evolution of Movement: High Dynamic Motion

The most significant pain point for any professional creator is the “micro-motion” trap. Most AI video tools are only capable of subtle movements like a person blinking or a slight camera pan. When I started testing Seedance 2.0 on VisualGPT, I specifically looked for its ability to handle High Dynamic Motion. I prompted a scene featuring a breakdancer performing a rapid headspin on a neon-lit street.

In most other tools I have tried, the dancer’s limbs would have blurred into the background or morphed into different shapes. Seedance 2.0 on VisualGPT, however, maintained strong anatomical consistency. The model supports large-scale body movements and complex physical shifts that feel weighted and intentional. For a director, this means the ability to create action-oriented content, from parkour sequences to high-speed chases, without the typical AI “hallucinations” that ruin the immersion.

Physical Law Adherence: Why Realism Matters

One of the quickest ways to spot a fake video is through its physics. If a character runs and their hair doesn’t flow correctly, or if light doesn’t reflect off a moving car in a logical way, the viewer immediately loses interest. This is where the engineering behind Seedance 2.0 on VisualGPT sets a new industry standard.

Mastering Fluid Dynamics and Gravity

I conducted a series of “stress tests” involving fluid dynamics, such as pouring liquid into a glass or capturing a heavy rainstorm. Most models struggle with the “liquification” effect, where textures seem to melt into each other. In these tests, Seedance 2.0 on VisualGPT adhered closely to physical laws. When water splashes, it follows a realistic trajectory governed by gravity and momentum. The “shimmering” or “flickering” effect that usually plagues AI videos was largely absent, making the final output look like it was shot on a physical set rather than generated on a cloud server.

Light, Shadow, and Environmental Consistency

Beyond just movement, physics includes how light interacts with the world. In my tests involving a moving light source—a flickering torch in a dark cave—the shadows cast by the environment stayed perfectly synchronized with the flame’s position. This level of physical grounding is essential for professional-grade cinematography where light and shadow are used to tell a story.

Directing the Virtual Camera: Lens Sense and Depth

What truly separates a hobbyist tool from a professional one is the degree of control over the “camera.” Seedance 2.0 on VisualGPT exhibits an advanced “camera consciousness” that mimics the behavior of real-world cinema equipment.

Multi-Lens Perspective and Bokeh

When I requested a “dolly zoom” effect (the famous Hitchcock zoom), the model understood the complex relationship between the focal length and the background magnification. It didn’t just zoom in; it shifted the perspective of the entire scene while keeping the subject stable. The depth of field was equally impressive—as the subject moved closer to the “lens,” the background bokeh blurred naturally, simulating the optical characteristics of a high-end 35mm prime lens.
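The relationship between focal length and background magnification in a dolly zoom can be sketched with a few lines of arithmetic. The Python below is only an illustration under a simple pinhole-camera approximation; it is not how Seedance 2.0 or VisualGPT works internally. The function names, the 36 mm sensor width, and the example distances are all assumptions chosen for the sketch.

```python
import math


def dolly_zoom_focal_length(f0_mm: float, d0_m: float, d_m: float) -> float:
    """Focal length that keeps the subject the same size in frame.

    Pinhole approximation: subject size on the sensor is proportional to
    focal_length / distance, so holding it constant means f scales with d.
    """
    return f0_mm * d_m / d0_m


def horizontal_fov_deg(focal_mm: float, sensor_width_mm: float = 36.0) -> float:
    """Horizontal field of view for a given focal length (assumed 36 mm sensor)."""
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_mm)))


def background_scale(d0_m: float, d_m: float, gap_m: float) -> float:
    """Apparent size of the background relative to the start of the move.

    The background sits a fixed gap_m behind the subject. With the subject
    held at constant size, background size is proportional to d / (d + gap).
    """
    return (d_m / (d_m + gap_m)) / (d0_m / (d0_m + gap_m))


if __name__ == "__main__":
    f0, d0, gap = 35.0, 4.0, 4.0  # hypothetical starting lens and distances
    for d in (4.0, 3.0, 2.0):     # camera dollies in toward the subject
        f = dolly_zoom_focal_length(f0, d0, d)
        print(
            f"distance {d:.1f} m: focal {f:.2f} mm, "
            f"FOV {horizontal_fov_deg(f):.1f} deg, "
            f"background x{background_scale(d0, d, gap):.2f}"
        )
```

Under these assumptions, dollying in while widening the lens keeps the subject fixed in frame while the background appears to shrink and recede, which is the perspective shift described above.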

Superior Semantic Understanding

Because this model is built on a deep semantic foundation, it interprets complex prompts well, which reduces the amount of prompt engineering the user has to do. I found that I could use abstract cultural references or highly specific technical jargon, and the model would translate those into visuals with high fidelity. Whether it’s the specific texture of ancient silk or the gritty atmosphere of a 1970s noir film, Seedance 2.0 on VisualGPT captures the nuance of the prompt without requiring dozens of revisions.

Conclusion

Seedance 2.0 (https://visualgpt.io/ai-models/seedance-2) on VisualGPT has effectively bridged the gap between high-end digital production and accessible AI technology. By focusing on the high dynamic motion that creators actually need and maintaining a strict adherence to the physical laws of our world, this combination provides a level of cinematic quality that was previously out of reach. For any filmmaker or content creator looking to push the boundaries of what is possible in digital storytelling, this platform offers the most robust and professional toolset I have used so far. It transforms the generative process from a game of chance into a precise, directorial experience.

Try it at https://visualgpt.io/ai-models/seedance-2.
